School & test engagement

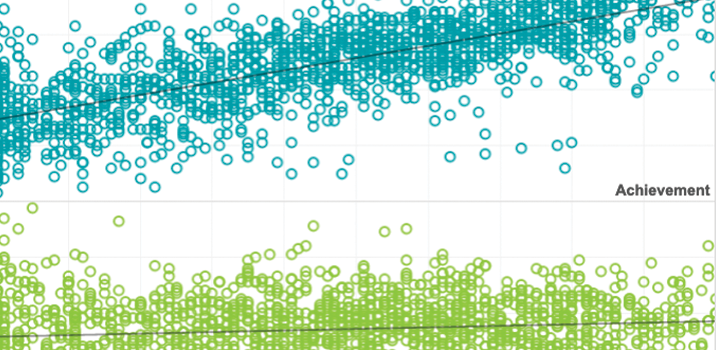

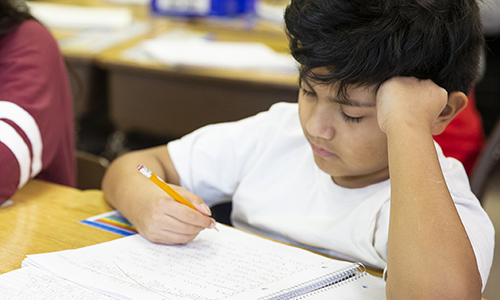

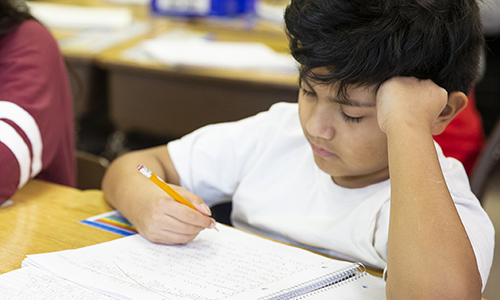

Educators need accurate assessment data to help students learn. But when students rapid-guess or otherwise disengage on tests, the validity of their scores can be affected. Our research examines the causes of test disengagement, how it relates to students’ overall academic engagement, and its impacts on individual test scores. We also look at its effects on aggregated metrics used for school and teacher evaluations, achievement gap studies, and more. This research explores better ways to measure and improve engagement and to help ensure that test scores more accurately reflect what students know and can do.

Validation and applications of rapid guessing to detect test taker disengagement

The International Association for the Evaluation of Educational Achievement (IEA), together with the Leibniz Institute for Research and Information in Education (DIPF) and the Centre for International Student Assessment (ZIB), offers an invitation to a three-day workshop on analyzing log file and process data from international large-scale assessments in education. The event will take place as an interactive webinar and online video conference on June 17–19, 2020.

By: Steven Wise

Topics: School & test engagement

The impact of test-taking disengagement on item content representation

Rapid-guessing can distort test scores and adversely affect measurement. New research shows how disengaged responses can also distort content representation.

By: Steven Wise

Topics: Measurement & scaling, Innovations in reporting & assessment, School & test engagement

Looking back: how prior-year attendance impacts starting achievement

This research uses interim assessment test results to measure the impact of prior-year attendance on starting achievement the following year. Results show the impacts are significant and persistent.

By: Shannon Bi, Emily Wolk

Topics: School & test engagement, Student growth & accountability policies

An intelligent CAT that can deal with disengaged test taking

This book presents varied applications of artificial intelligence (AI) in test development, including research and successful examples of using AI technology in automated item generation, automated test assembly, automated scoring, and computerized adaptive testing.

By: Steven Wise

Topics: Measurement & scaling, Innovations in reporting & assessment, School & test engagement

A cessation of measurement: Identifying test taker disengagement using response time

This chapter explores both what happens when test takers disengage and how this disengagement should be managed during scoring.

By: Steven Wise, Megan Kuhfeld

Topics: School & test engagement

Using retest data to evaluate and improve effort-moderated scoring

This study investigated effort‐moderated (E‐M) scoring, in which item responses classified as rapid guesses are identified and excluded from scoring, and its effect on score distortion from disengaged test taking.

By: Steven Wise, Megan Kuhfeld

Topics: Measurement & scaling, Innovations in reporting & assessment, School & test engagement

Parameter estimation accuracy of the effort-moderated IRT model under multiple assumption violations

This session from the National Council on Measurement in Education 2020 virtual conference presents new research findings on understanding and managing test-taking disengagement.

By: James Soland, Joseph Rios