Innovations in reporting & assessment

Emerging technologies allow for a variety of methods to assess students and report data specific to the needs of different stakeholders. These approaches can make assessments more engaging for students and make reporting more insightful and useful for students, families, and educators.

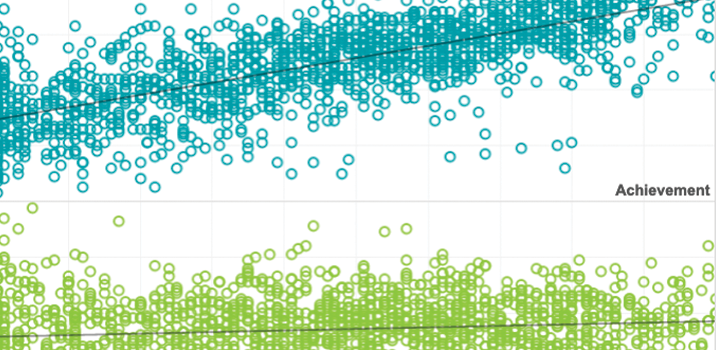

Rapid‐guessing behavior: Its identification, interpretation, and implications

The rise of computer‐based testing has brought with it the capability to measure more aspects of a test event than simply the answers selected or constructed by the test taker. One behavior that has drawn much research interest is the time test takers spend responding to individual multiple‐choice items.

By: Steven Wise

Topics: Measurement & scaling, Innovations in reporting & assessment, School & test engagement

A general approach to measuring test-taking effort on computer-based tests

The current study outlines a general process for measuring item-level effort that can be applied to an expanded set of item types and test-taking behaviors (such as omitted or constructed responses). This process, which is illustrated with data from a large-scale assessment program, should improve our ability to detect non-effortful test taking and perform individual score validation.

By: Steven Wise, Lingyun Gao

Topics: Measurement & scaling, Innovations in reporting & assessment, Student growth & accountability policies

This manuscript reports results from two studies conducted during the development of KinderTEK, an iPad-delivered kindergarten mathematics intervention, to determine the relationship between instructor-reported technology experience and intervention implementation, as measured by student use.

By: Lina Shanley, Mari Strand Cary, Ben Clarke, Meg Guerreiro, Michael Thier

Design Challenge winner: Student assessment engagement

In this CASEL Measuring SEL blog, James Soland shares how work with Santa Ana Unified School District led to new insights on how item response times and test metadata may provide insight into student SEL.

By: James Soland

Topics: School & test engagement, Innovations in reporting & assessment, Social-emotional learning

When computer-based tests are used, disengagement can be detected through occurrences of rapid-guessing behavior. This empirical study investigated the impact of a new effort monitoring feature that can detect rapid guessing, as it occurs, and notify proctors that a test taker has become disengaged.

By: Steven Wise, Megan Kuhfeld, James Soland

Topics: Measurement & scaling, Innovations in reporting & assessment, School & test engagement

This paper briefly discusses the trade-offs involved in transitioning to computer-based testing, and then focuses on a relatively unexplored benefit of computer-based tests: the control of construct-irrelevant factors that can threaten test score validity.

By: Steven Wise

Topics: Measurement & scaling, Innovations in reporting & assessment, School & test engagement

Learning styles: Considerations for technology enhanced item design

Learning styles (LS) have been used for classifying students by their preferences relative to taking information in, processing it and demonstrating their ability in the context of education. This paper investigates the role of LS in K-12 education by considering the manner in which student LS are assessed and the extent to which they have informed K-12 instruction.

By: Deborah Adkins, Meg Guerreiro

Topics: Innovations in reporting & assessment, Empowering educators