Book

An intelligent CAT that can deal with disengaged test taking

Wise, S. (2020). An intelligent CAT that can deal with disengaged test taking. In H. Jiao & R. W. Lissitz (Eds.), Application of artificial intelligence to assessment (pp. 161-174). Information Age Publishing.

June 2020

By: Steven Wise

Description

This book presents varied applications of artificial intelligence (AI) in test development, including research and successful examples of using AI technology in automated item generation, automated test assembly, automated scoring, and computerized adaptive testing.

This book was published outside of NWEA. The full text can be found at the link above.

Related Topics

Exploring the educational impacts of COVID-19

This visualization was developed to provide state-level insights into how students performed on MAP Growth in the 2020–2021 school year. Assessments are one indicator, among many, of the student impact from COVID-19. Our goal with this tool is to create visible data that informs academic recovery efforts that will be necessary in the 2022 school year and beyond.

By: Greg King

Topics: COVID-19 & schools, Innovations in reporting & assessment

Executive Summary: Content proximity spring 2022 pilot study

This executive summary outlines results from the Content Proximity spring 2022 pilot study, including information on the validity, reliability, and test score comparability of MAP Growth assessments that leverage this new item-selection algorithm.

By: Patrick Meyer, Ann Hu, Xueming (Sylvia) Li

Products: MAP Growth

Topics: Computer adaptive testing, Innovations in reporting & assessment, Test design

Content Proximity Spring 2022 Pilot Study Research Report

The purpose of this research report is to provide detailed information about updates to the MAP Growth item-selection algorithm. This brief includes results from the Content Proximity pilot study, including information on the validity, reliability, and test score comparability of MAP Growth assessments that leverage this new item-selection algorithm.

By: Patrick Meyer, Ann Hu, Xueming (Sylvia) Li

Products: MAP Growth

Topics: Computer adaptive testing, Innovations in reporting & assessment, Test design

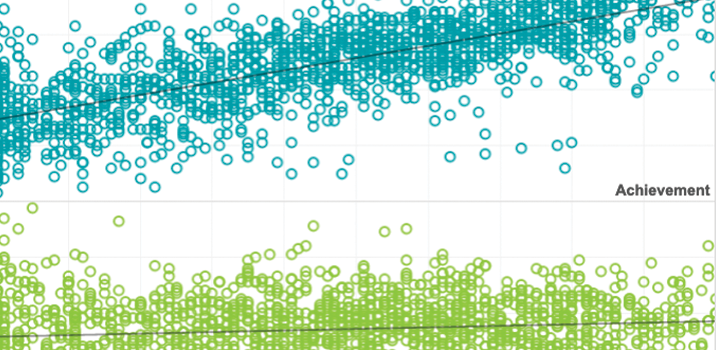

Achievement and Growth Norms for Course-Specific MAP Growth Tests

This report documents the procedure used to produce the achievement and growth user norms for a series of course-specific MAP® Growth™ subject tests: Algebra 1, Geometry, Algebra 2, Integrated Math I, Integrated Math II, Integrated Math III, and Biology/Life Science. Norms for Integrated Math I, Integrated Math II, Integrated Math III, and Biology/Life Science are available for the first time. Norms for the remaining tests (Algebra 1, Geometry, and Algebra 2) were updated using more recent test events and now include more between-term growth norms. The report also describes in detail the procedure for norm sample selection and the model-based approach used to generate the norms, which relies on the multivariate true score model (Thum & He, 2019) to factor out known imprecision of scores, and it provides snapshots of the achievement and growth norms for each test.

By: Wei He

Products: MAP Growth

Topics: Measurement & scaling

Longitudinal models of reading and mathematics achievement in deaf and hard of hearing students

New research using longitudinal data provides evidence that deaf and hard of hearing (DHH) students continue to build skills in math and reading throughout grades 2 to 8, challenging assumptions that DHH students’ skills plateau in elementary grades.

By: Stephanie Cawthon, Johny Daniel, North Cooc, Ana Vielma

Topics: Equity, Measurement & scaling

Achievement and growth norms for English MAP Reading Fluency Foundational Skills

This report documents the norming study procedure used to produce the achievement and growth user norms for English MAP Reading Fluency Foundational Skills.

By: Wei He

Products: MAP Reading Fluency

Topics: Measurement & scaling

This study compared the test-taking disengagement of students taking a remotely administered adaptive interim assessment in spring 2020 with their disengagement on the assessment administered in school during fall 2019.

By: Steven Wise, Megan Kuhfeld, John Cronin

Topics: Equity, Innovations in reporting & assessment, School & test engagement