Presentation

Parameter estimation accuracy of the effort-moderated IRT model under multiple assumption violations

September 2020

By: James Soland, Joseph Rios

Description

This session from the 2020 National Council on Measurement in Education virtual conference presents new research findings on understanding and managing test-taking disengagement.

Soland, J., & Rios, J. (2020, September). Parameter estimation accuracy of the effort-moderated IRT model under multiple assumption violations. National Council on Measurement in Education 2020 virtual conference.

Related Topics

An investigation of examinee test-taking effort on a large-scale assessment

Most previous research involving the study of response times has been conducted using locally developed instruments. The purpose of the current study was to examine the amount of rapid-guessing behavior within a commercially available, low-stakes instrument.

By: Steven Wise, J. Carl Setzer, Jill R. van den Heuvel, Guangming Ling

Topics: Measurement & scaling, School & test engagement, Student growth & accountability policies

These studies assume regular conditions for fixed test forms, such as no missing responses and a normally distributed, unidimensional ability in the population.

By: Shudong Wang, Hong Jiao

Topics: Measurement & scaling, Computer adaptive testing, Item response theory

Using real data, this study provides empirical evidence on the construct and construct invariance of MAP scales across grades and different academic calendars in 10 states.

By: Shudong Wang, Martha S. McCall, Hong Jiao, Gregg Harris

Topics: Measurement & scaling, Test design

This study uses a multiple-indicator latent growth modeling (MLGM) approach to examine the longitudinal achievement construct of MAP Growth and its invariance.

By: Shudong Wang, Hong Jiao, Liru Zhang

Topics: Measurement & scaling, Growth modeling

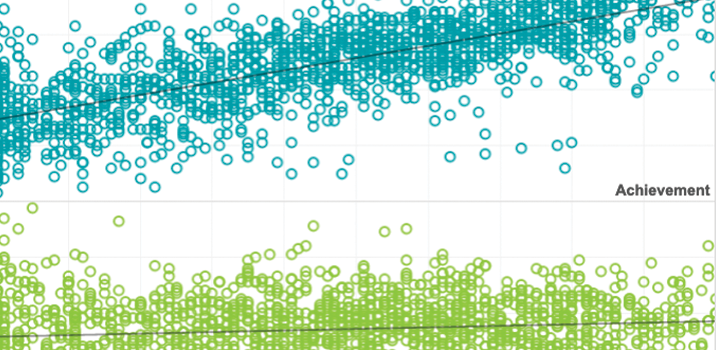

The potential of adaptive assessment

In this article, the authors explain how computerized adaptive testing (CAT) provides a more precise and accurate picture of the achievement levels of both low-achieving and high-achieving students by adjusting questions as the test progresses. The immediate, informative results enable teachers to differentiate instruction to meet each student's current academic needs.

By: Edward Freeman

Topics: Innovations in reporting & assessment, Measurement & scaling, Student growth & accountability policies

The phantom collapse of student achievement in New York

When New York State released the first exam results under the Common Core State Standards, many wrongly believed that they showed dramatic declines in student achievement. A closer look suggested that student achievement may actually have increased.

By: John Cronin, Nate Jensen

Topics: Measurement & scaling