Presentation

A cessation of measurement: Identifying test taker disengagement using response time

September 2020

Description

In this session from the National Council on Measurement in Education 2020 virtual conference (starting at 1:06:40 in the video), Dr. Wise shares research insights into test disengagement and how disengagement should be managed in scoring.

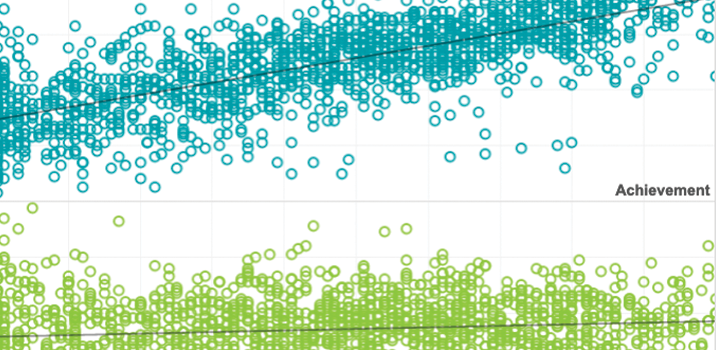

There is a long tradition of using achievement tests to assess an individual’s knowledge, skills, and abilities. During such tests, a series of items is administered to a test taker, and the responses to these items provide information that is aggregated to produce a test score. The validity of inferences based on the resulting score depends, in part, on the assumption that each item response reflects the construct or domain of interest. In reality, however, test takers sometimes disengage from their test and give responses that do not reflect their knowledge, skills, and abilities. When computer-based tests are used, response times are recorded, and such item responses can be identified as rapid-guessing behavior.

The presence of rapid guesses in test data invites the question of what to do about them. In this presentation, we contend that because rapid guesses do not reflect what a test taker knows and can do, they do not contribute to measurement and should therefore be excluded from scoring. This assertion is based on the belief that rapid guessing on an item represents a test taker’s choice to “opt out” of the measurement process. We provide evidence to support this assertion through both data analyses and previous research findings. Collectively, this evidence shows that rapid guesses reflect a distinctly different, construct-irrelevant response process. Moreover, if rapid guesses are included in scoring, they introduce systematic measurement errors that can seriously distort scores.
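As a rough illustration of the idea of excluding rapid guesses from scoring, the following Python sketch flags responses whose response time falls below a threshold and computes a score from the remaining responses only. Everything specific here is an assumption made for illustration and is not taken from the presentation: the `ItemResponse` structure, the function names, the single fixed 3-second threshold, and the use of proportion correct as a stand-in for whatever scoring model a real testing program uses.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class ItemResponse:
    """One scored item response (hypothetical structure for illustration)."""
    item_id: str
    correct: bool
    response_time_s: float  # seconds spent on the item


def is_rapid_guess(resp: ItemResponse, threshold_s: float) -> bool:
    """Flag a response as a rapid guess if it was faster than the threshold."""
    return resp.response_time_s < threshold_s


def score_excluding_rapid_guesses(
    responses: List[ItemResponse],
    threshold_s: float = 3.0,  # assumed illustrative threshold, not a recommendation
) -> Tuple[Optional[float], float]:
    """Return (proportion correct over engaged responses, engagement rate).

    The score is None when no engaged responses remain.
    """
    engaged = [r for r in responses if not is_rapid_guess(r, threshold_s)]
    if not engaged:
        return None, 0.0
    proportion_correct = sum(r.correct for r in engaged) / len(engaged)
    engagement_rate = len(engaged) / len(responses)
    return proportion_correct, engagement_rate


# Made-up example data: two engaged responses and two very fast ones.
example = [
    ItemResponse("q1", True, 20.0),
    ItemResponse("q2", False, 1.0),
    ItemResponse("q3", True, 15.0),
    ItemResponse("q4", False, 0.5),
]
score, engagement = score_excluding_rapid_guesses(example)
raw_score = sum(r.correct for r in example) / len(example)
```

In this made-up data, the score over all four responses differs from the score over the two engaged responses, which is the kind of distortion the abstract describes. Reporting the engagement rate alongside the score indicates how much of the test actually contributed to measurement.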
