School & test engagement

Educators need accurate assessment data to help students learn. But when students rapid-guess or otherwise disengage on tests, the validity of scores can be affected. Our research examines the causes of test disengagement, how it relates to students’ overall academic engagement, and its impacts on individual test scores. We look at its effects on aggregated metrics used for school and teacher evaluations, achievement gap studies, and more. This research also explores better ways to measure and improve engagement and to help ensure that test scores more accurately reflect what students know and can do.

When computer-based tests are used, disengagement can be detected through occurrences of rapid-guessing behavior. This empirical study investigated the impact of a new effort-monitoring feature that can detect rapid guessing as it occurs and notify proctors that a test taker has become disengaged.

By: Steven Wise, Megan Kuhfeld, James Soland

Topics: Measurement & scaling, Innovations in reporting & assessment, School & test engagement

This paper briefly discusses the trade-offs involved in making a transition to computer-based tests, and then focuses on a relatively unexplored benefit of such tests: the control of construct-irrelevant factors that can threaten test score validity.

By: Steven Wise

Topics: Measurement & scaling, Innovations in reporting & assessment, School & test engagement

Are test and academic disengagement related? Implications for measurement and practice

In this study, we examine whether behaviors indicative of academic disengagement, such as chronic absenteeism and course failures, are related to behaviors indicative of test disengagement, such as rapid guessing on items.

By: Emily Wolk, Sharon Bi, Tran Keys

Topics: High school, School & test engagement, Social-emotional learning

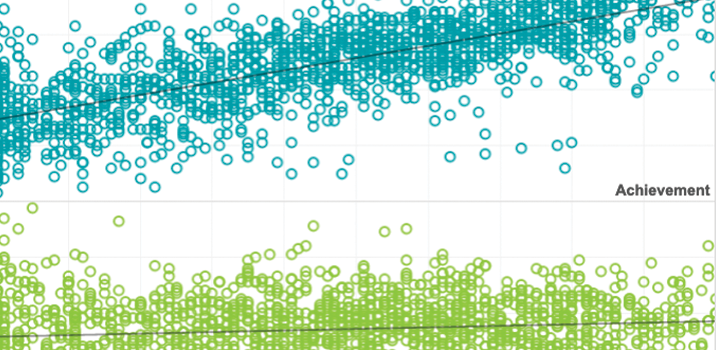

Achievement gaps are a metric of fundamental importance to U.S. practice and policy. Gap estimates are often used to measure the effectiveness and fairness of the education system at a given point in time, over the course of decades, and as children progress through school.

By: James Soland

Topics: Equity, School & test engagement, Student growth & accountability policies

This study examines whether test effort differs by student subgroup, including by race and gender. The sensitivity of achievement gap estimates to any differences in test effort is also considered.

By: James Soland

Topics: Equity, School & test engagement

In this article, we examined the prevalence of rapid guessing to determine whether this behavior varied by grade, subject, and teacher, and evaluated whether rapid guessing influenced teacher value-added estimates. We observed differences in rapid guessing across grades, subjects, and teachers; however, this behavior did not appear to have a substantive effect on teacher value-added estimates.

By: Andrew Rice

Topics: School & test engagement, Student growth & accountability policies

This dissertation for the University of Oregon examined how varying degrees of technology enhancement applied to a mathematics performance task relate to student cognitive engagement.

By: Meg Guerreiro

Topics: School & test engagement, Math & STEM