The new school year is well underway nationwide, and it’s worth pausing a moment to reflect on the investments school districts made in summer learning. To help students recover from large opportunity gaps, many districts bet on new or expanded summer programs. In a national survey from 2022, 70% of them reported providing new or expanded summer programming because of the pandemic. How well are those programs working? Are they enough to get students back on track?

A new study found that summer school programs in the study’s sample districts helped students make gains in math but not in reading. Moreover, because participation was far from universal, the gains made up just two to three percent of a district’s total learning loss in math, and none in reading.

This research is part of a unique and ongoing partnership between CALDER at the American Institutes for Research (AIR), the Center for Education Policy Research (CEPR) at Harvard, and my organization, NWEA. Out of our 11 partner school districts, eight provided data on their summer 2022 programs. These eight districts collectively enroll approximately 400,000 students who are disproportionately Black, Hispanic, and/or low-income.

Our findings on the impacts of these summer programs largely come down to what readers can think of as the “dosage” of the summer school treatment. Student gains were broadly in line with what might have been expected given prior research and the amount of added instructional time students actually received. But this points to a clear lesson for policymakers: students who fell behind during the pandemic will need much more support to catch up.

About the study

To measure the “dosage” of summer programs, we looked at participation, attendance, and the number of instructional hours students actually received in the program. For the purposes of this study, we focused on programs that offered at least some formal academic support in math and/or ELA, either alone or in conjunction with enrichment activities. That means we excluded programs that focused exclusively on enrichment activities. While our study focused on academic outcomes, it would be useful for future research to study the effects these programs had on non-academic outcomes.

The districts in our sample offered summer programs that were between 15 and 20 days long. They offered anywhere from 45 minutes to two hours of daily academic instructional time in math and reading. All told, total academic instructional time ranged from 23 to 67 hours across the programs.

In the districts where we could observe attendance, the overall participation rates varied substantially, ranging from 5 to 23 percent. Across our full sample, about one out of eight eligible students (13 percent) enrolled in an academic summer program.

Students who participated in summer programs tended to score substantially lower on the MAP® Growth™ interim assessment than students who did not participate. Participation rates were also higher for historically underserved student subgroups, including students receiving special education services, English language learners, economically disadvantaged students, and Black and Hispanic students.

The proportion of days students actually attended also varied across the districts, from 58 to 80 percent, with an average of 69 percent. Across the districts, this translates to students attending about 10 to 14 days of summer school. Accounting for attendance and 60 to 120 minutes of instruction per subject, students received approximately 14 to 27 hours of additional instructional time per subject.
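The dosage arithmetic described above can be sketched in a few lines of Python. This is only an illustration: the function name `hours_received` and the example inputs are assumptions made here, and the combination shown (a 20-day program, the 69 percent sample-average attendance rate, and 60 minutes per subject per day) is hypothetical rather than a description of any district in the study.

```python
def hours_received(days_offered: float, attendance_rate: float,
                   minutes_per_day: float) -> float:
    """Estimate instructional hours per subject a participant actually received.

    days_offered:     length of the summer program in days
    attendance_rate:  share of program days attended, between 0 and 1
    minutes_per_day:  daily instructional minutes in one subject
    """
    if not 0.0 <= attendance_rate <= 1.0:
        raise ValueError("attendance_rate must be between 0 and 1")
    if days_offered < 0 or minutes_per_day < 0:
        raise ValueError("days_offered and minutes_per_day must be non-negative")

    # Days actually attended, then minutes converted to hours.
    days_attended = days_offered * attendance_rate
    return days_attended * minutes_per_day / 60


if __name__ == "__main__":
    # Hypothetical program: 20 days, 69% attendance, 60 minutes per subject.
    hours = hours_received(days_offered=20, attendance_rate=0.69, minutes_per_day=60)
    print(f"Estimated hours per subject: {hours:.1f}")
```

The point of the sketch is that hours received scale with each input: a longer program, better attendance, or more daily minutes each raises the dose in proportion.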

Summer programs were effective in math, but not reading

Students who attended summer programs tended to make small but statistically significant improvements in math achievement, but they had no statistically significant gains on reading tests relative to similar peers who did not attend. Our results are important not only for adding evidence on the use of summer programs to improve student learning but also for showing whether these programs can make noticeable headway in addressing COVID-19 learning loss.

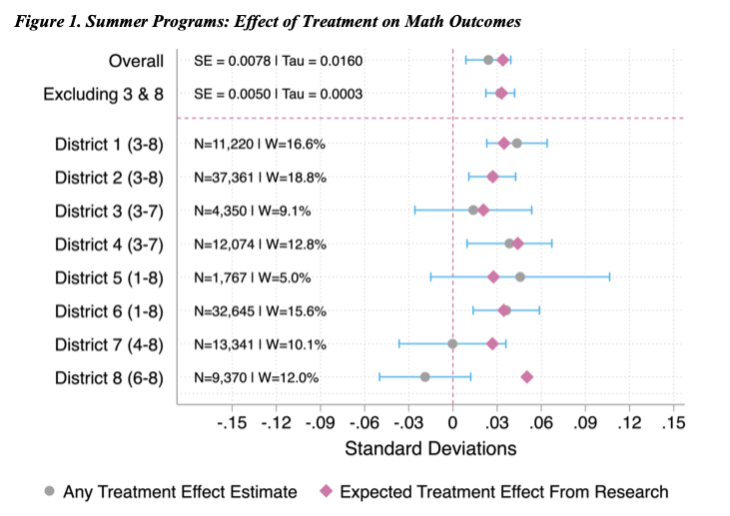

The positive effects we observe in math are not far off from what pre-pandemic research on the impact of summer school attendance would have led us to expect. These estimates also account for the number of hours of instruction students actually received.

Our district-level results came out remarkably close to those expectations. The chart (Figure 1 in our paper) compares the gains in math test scores that might have been expected given prior research (the red diamonds) with the actual gains (in gray). While the estimates vary across districts, all but those for Districts 7 and 8 are positive for math test scores and quite close to the expected effects.

In other words, students tended to benefit from the added instructional time in math. But the gains were relatively small, and that’s almost entirely a function of the “dosage” of the summer programs. Without giving students more added instructional time, we shouldn’t expect them to make much larger gains absent some dramatic improvements in the quality of instruction they receive.

The results were less positive in reading, where the overall effects were indistinguishable from zero. We speculate that reading gains may be harder to achieve than math gains for summer school participants relative to non-participants because non-participants may also practice reading over the summer but may be less likely to practice math. This explanation would align with evidence that school inputs have larger effects on math achievement than on reading achievement.

The road to recovery remains long

Based on the latest NWEA research, students nationally remain far behind where their peers were before the pandemic. The average eighth-grade student, for example, was the equivalent of nine months behind in math and seven months behind in reading at the end of the 2022–23 school year.

There are some potential kernels of good news in this new report. We found that adding days of programming (with the same or more instructional time) resulted in additional, proportional gains for students, at least in math. The gains were also consistent across different student subgroups, suggesting that, when summer program space is limited, increasing the targeted recruitment and attendance of students who would most benefit may be an effective strategy for boosting achievement among students with the greatest academic needs. This is a particularly important finding given prior research showing that students with disabilities and English learners tend to suffer larger academic losses during the summer than their peers. Expanding summer school offerings may help address these gaps.

Notably, one of our sample districts highlights a potential path forward to boost participation rates in summer programs. It offered extended operating hours, provided childcare for working parents, and framed its summer programs as “summer camp” (an exciting learning and enrichment program) as opposed to “summer school.” Although the evidence is not definitive, the district that structured its summer programs this way had the highest participation rate in our sample.

That said, as we conclude in the paper, our findings underscore the need for a continued commitment from policymakers at all levels to deliver recovery interventions at the scale and intensity needed to address the pandemic’s academic impact. Failing to do so will have dire consequences for students and for our wider society.