I remember watching the Olympics as a little girl and being so excited by all the flips and jumps in a gymnastics floor routine. I couldn’t wait to see the overall score for each athlete given at the end.

Although reading is different from a sport, kids use many different skills when they first learn routines in phonological awareness and phonics, whether they’re clapping syllables in words or substituting one phoneme for another to create a new word. Some of these skills require a lot of thinking and practice. It’s like kids are doing flips and twists with individual sounds to make them into words.

These foundational skills are interrelated as children move through the continuum, strengthening their ability in the overall domain of phonological awareness and phonics. How can we tell if a student is improving these skills over time? With progress monitoring in MAP® Reading Fluency™.

Sequence matters: The mental gymnastics involved in learning to read

Think of a student’s skills acquisition in reading as mental gymnastics. Learning the 44 phonemes that our 26 letters represent, as it turns out, requires skill and practice. We would not naturally learn to read if left alone in the world with a book. We have to be taught, and it all begins with sound.

Young readers need to be able to identify and delete a sound in a word before they can substitute another sound for it. Being aware of the order in which skills need to be learned can help us appreciate the difficulty involved. Just as learning one movement at a time in a gymnastics routine eventually leads to a flash of a body moving and twisting in the air, once a child knows how to identify the beginning sound in a word, they are on their way to the grand skill of substituting one sound for another.

Take changing the word “grin” to “grain,” for example. First, we have to identify the sound to change: /i/. Then we have to remember what sound is going to replace that identified sound (/a/), and we have to be able to delete the original sound and put the new one in its place. Once we’ve done all that, we have to be able to blend the sounds together (/g/ /r/ /a/ /n/), a skill learned earlier, to form the new word: “grain.”
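For readers who like to see a sequence spelled out, here is a minimal sketch of those steps in Python. It is only an illustration of the order of operations (identify, delete and replace, then blend); the function name `substitute_phoneme` and the list-of-sounds representation are assumptions made for this example, not anything from MAP Reading Fluency.

```python
def substitute_phoneme(phonemes, old, new):
    """Swap one sound for another, then blend the sounds back together."""
    # Step 1: identify where the sound to change sits in the word.
    index = phonemes.index(old)
    # Step 2: delete that sound and put the new sound in its place.
    changed = phonemes[:index] + [new] + phonemes[index + 1:]
    # Step 3: blend the sounds, written here in slash notation.
    blended = " ".join(f"/{p}/" for p in changed)
    return changed, blended


# "grin" broken into its sounds; /a/ stands for the vowel sound in "grain".
grin = ["g", "r", "i", "n"]
changed, blended = substitute_phoneme(grin, "i", "a")
print(blended)  # /g/ /r/ /a/ /n/
```

Each step depends on the one before it, which is why a child who cannot yet identify a sound has nothing to delete or replace.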

If I am working with a child every day, I know how they are progressing on these individual skills. What I don’t know is how well they are doing compared to their peers and in the domain overall, which is critical. That’s where progress monitoring in MAP Reading Fluency comes in.

Scoring a perfect 10 isn’t the goal of progress monitoring

The primary goal of progress monitoring is answering the question, Is my intervention working to catch this student up?

In the foundational skills domains, our aim is to help young readers build their mental muscles so they can quickly move from one skill they’ve previously learned to another. The idea is that when students start decoding words, they have already manipulated sounds so much that breaking a word into its component sounds through letter patterns is natural and automatic.

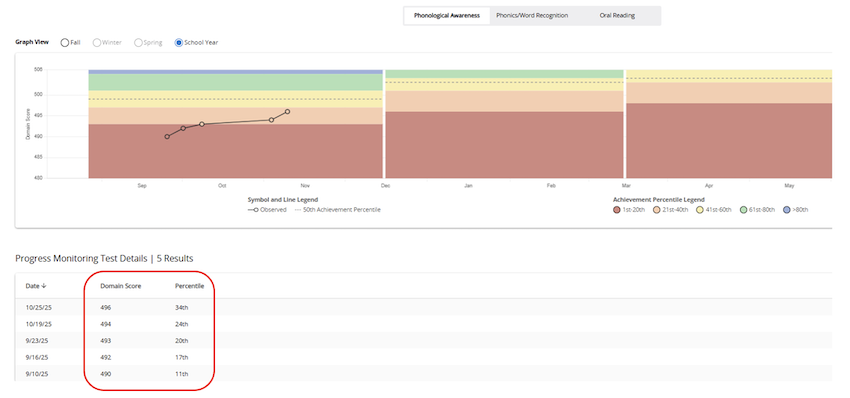

Progress monitoring in MAP Reading Fluency gives teachers an overall domain score and a percentile, which together show how a student compares to their peers. The sample from MAP Reading Fluency reporting shows how a teacher can see the results from multiple testing events as well as how a student’s skills have changed over time.

Depending on how fast a student is growing, a good guideline is to aim for the fiftieth percentile of achievement as an initial goal. This is shown as the dotted line within the yellow bar. Once a student establishes a trend line after a few progress monitoring sessions, a goal can be further refined and can be based on a combination of growth and achievement percentiles to create a reasonable target to aim for. Being able to see how the average student is achieving lends some perspective to the level of intensity of intervention to plan for a student.

As a teacher, I already know the skills my students are working on and how well they’re doing in mastering them. What I don’t know is how well they’re performing on the “mat,” that is, their overall score in the domain and how they’re comparing to their peers, those other contenders at the gymnastics meet. MAP Reading Fluency supports me in uncovering that and giving each student the instruction they need most.

In this sample, I can clearly see the student is moving in the right direction. The data I get for each student can help me decide if I need to change an intervention to encourage progress more steeply toward that dotted line, or if a student is firmly on track to get there by the end of the term or school year.
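The “on track or not” judgment can be sketched as a simple trend line projection. The example below is a rough illustration under stated assumptions: the weeks, scores, and goal are invented numbers, the helper names `fit_trend` and `project_to_goal` are hypothetical, and an ordinary least-squares line is just one reasonable way to draw a trend. It is not a description of how MAP Reading Fluency calculates its reports.

```python
def fit_trend(weeks, scores):
    """Fit a straight line (least squares) through progress monitoring scores."""
    n = len(weeks)
    mean_x = sum(weeks) / n
    mean_y = sum(scores) / n
    sxx = sum((x - mean_x) ** 2 for x in weeks)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(weeks, scores))
    slope = sxy / sxx                      # score points gained per week
    intercept = mean_y - slope * mean_x    # estimated score at week 0
    return slope, intercept


def project_to_goal(weeks, scores, goal_score, goal_week):
    """Project the trend to the goal week and compare it with the goal."""
    slope, intercept = fit_trend(weeks, scores)
    projected = intercept + slope * goal_week
    return projected >= goal_score, projected


# Invented data: four testing events, two weeks apart.
weeks = [0, 2, 4, 6]
scores = [10, 12, 14, 16]

# Hypothetical goal: a score of 20 (the "dotted line") by week 12.
on_track, projected = project_to_goal(weeks, scores, goal_score=20, goal_week=12)
print(on_track, projected)
```

If the projected score falls short of the goal, that is a signal to consider a more intensive intervention; if it meets or passes the goal, the current plan appears to be working.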

MAP Reading Fluency gives teachers perspective

Remember, if I am working intensively with a child several times a week, I know if they can blend words (for example, /c/ /a/ /t/ to “cat”) or segment them (for example, “tub” to /t/ /u/ /b/). I can also tell if they’re having trouble with substituting phonemes (for example, going from “fan” to “fin”). What I need to know is how they’re growing overall as a “gymnast” in their manipulation of sounds in words and if they’re performing like the average first or second grader in their percentile score. That gives me perspective.

I need perspective as a teacher of many students. I need to know if every student is catching up overall across the domain. After all, the gymnast isn’t getting a score for each individual skill, but one overall score for their performance.

The confidence to meet the needs of every student

A gymnastics floor routine may have an outstanding jump or flip, but the athlete may lack skills in footwork or style. Looking at the bigger picture of performance is similarly important in reading instruction.

Progress monitoring in MAP Reading Fluency asks students questions to assess a variety of skills, and it helps me, the teacher, understand if my interventions have truly supported growth overall. If I have a score in every unique skill, like blending four phonemes to make the word “skip” or substituting phonemes to go from “hut” to “hat,” I may have a lot of detail on what a student knows, but it’s unlikely that I’ll have a clear understanding of how well they are doing overall. Without progress monitoring, measuring each unique skill would be a longer process and would take away the instructional time the child needs to catch up.

We may not be aiming for an Olympic gold medal when we help students develop foundational reading skills, but we are aiming to make sure all kids can perform as expected in the twists and turns required in phonological awareness and phonics. Domain scores and norms available through progress monitoring in MAP Reading Fluency can help us make sure each student lands solidly on the mat after complex routines of sounds and letter patterns.